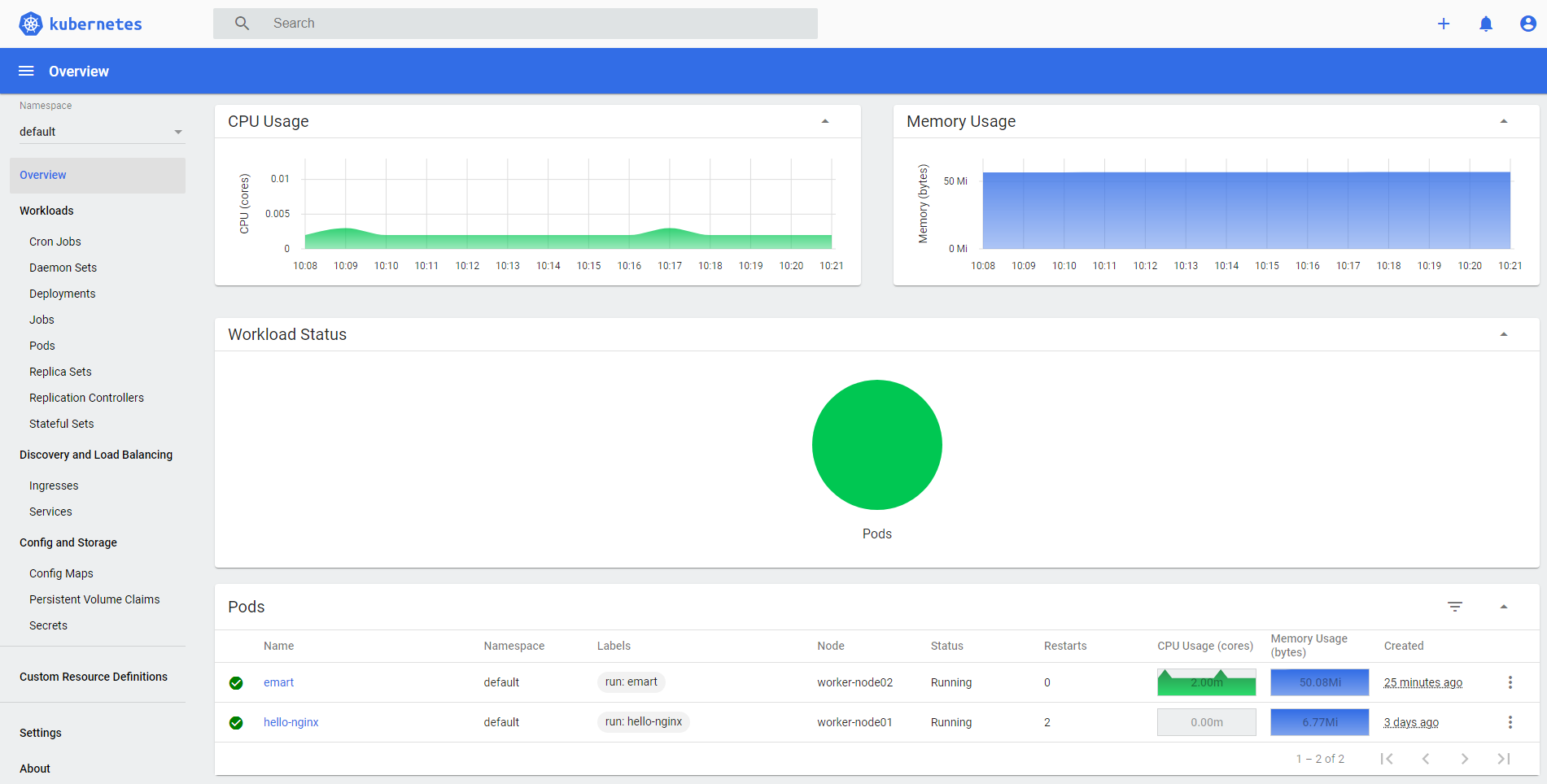

Our Proxmox support team is here to offer a helping hand with your queries and issues. Today, let us see the steps our support techs follow to install Kubernetes with Ansible.

Initial Ansible Housekeeping

First, we need to specify some variables, similar to how we did it with Terraform. Create a file in your working directory called `ansible-vars.yml`. It holds:

- a CIDR for the cluster to use. This can be basically any private range except ranges already in use; since it isn't too hard to run out of IPs in a /24, we're using a /22.
- `join_command_location: "join_command.out"`, which defines the filename the join command is saved to.
- the home directory used for retrieving, saving, and executing files.

Equally important (and potentially a better starting point than the variables) is defining the hosts. `ansible-hosts.txt` is a basic file that puts the different hosts into categories, which Ansible uses to determine which actions to run on which hosts. Lines starting with `#` are comments, so a host can be left out by commenting it out (for example `#10.98.1.61`).

Installing Kubernetes dependencies with Ansible

Then we need a playbook to install the dependencies and the Kubernetes utilities themselves. Playbook `.yml` files define which tasks (operations) to run. `ansible-install-kubernetes-dependencies.yml` gets apt ready to install things, adds the Docker and Kubernetes signing keys, installs Docker and Kubernetes, disables swap, and adds the `ubuntu` user to the `docker` group. It runs on `hosts: all` (the "all" hosts category from `ansible-hosts.txt`), and its tasks include:

- Install packages that allow apt to be used over HTTPS
- Add an apt signing key for Docker
- Add an apt repository for the stable version
- Install Docker and its dependencies
- Verify Docker is installed, enabled, and started
- Add an apt signing key for Kubernetes
- Add an apt repository for Kubernetes
- Hold the Kubernetes binary versions to prevent them from being updated (it is usually recommended to specify which version you want to install)

Next, join the worker nodes to the cluster. Some of this step's configuration has to do with the nodes having different internal, external, and management IPs.

`ansible-playbook -i ansible-hosts.txt ansible-join-workers.yml`

Wait a few minutes, then log into the server and run `kubectl get nodes` to verify that the nodes are present and active (status = `Ready`).

With the two worker nodes/agents joined to the cluster, you now have a full-on Kubernetes cluster up and running!

Kubernetes Dashboard

Everyone likes a dashboard, and Kubernetes has a good one for poking and prodding around.
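For reference, the dependency playbook described earlier is given only as a list of task names. The following is a minimal sketch of what `ansible-install-kubernetes-dependencies.yml` could look like. It assumes Ubuntu hosts, the `ubuntu` user, and the current upstream Docker and Kubernetes (`pkgs.k8s.io`) apt repositories. The repository URLs, the package names, the swap handling, and the pinned Kubernetes minor version `v1.29` are all assumptions, not the original's values.

```yaml
# ansible-install-kubernetes-dependencies.yml (sketch)
# ASSUMPTIONS: Ubuntu hosts, upstream Docker/pkgs.k8s.io repos, Kubernetes v1.29
- hosts: all            # run on the "all" hosts category from ansible-hosts.txt
  become: true
  tasks:
    - name: Install packages that allow apt to be used over HTTPS
      apt:
        name: [apt-transport-https, ca-certificates, curl, gnupg]
        state: present
        update_cache: true

    - name: Add an apt signing key for Docker
      apt_key:
        url: https://download.docker.com/linux/ubuntu/gpg
        state: present

    - name: Add apt repository for stable version
      apt_repository:
        repo: "deb https://download.docker.com/linux/ubuntu {{ ansible_distribution_release }} stable"
        state: present

    - name: Install docker and its dependencies
      apt:
        name: [docker-ce, docker-ce-cli, containerd.io]
        state: present
        update_cache: true

    - name: Verify docker installed, enabled, and started
      service:
        name: docker
        state: started
        enabled: true

    - name: Add an apt signing key for Kubernetes
      apt_key:
        url: https://pkgs.k8s.io/core:/stable:/v1.29/deb/Release.key
        state: present

    # Pointing at a single minor-version repo is one way to pin the version
    - name: Adding apt repository for Kubernetes
      apt_repository:
        repo: "deb https://pkgs.k8s.io/core:/stable:/v1.29/deb/ /"
        state: present
        filename: kubernetes

    - name: Install the Kubernetes binaries
      apt:
        name: [kubelet, kubeadm, kubectl]
        state: present
        update_cache: true

    - name: Hold kubernetes binary versions (prevent from being updated)
      dpkg_selections:
        name: "{{ item }}"
        selection: hold
      loop: [kubelet, kubeadm, kubectl]

    - name: Disable swap for the running system
      command: swapoff -a
      when: ansible_swaptotal_mb > 0

    - name: Comment out swap entries in /etc/fstab so swap stays off after reboot
      replace:
        path: /etc/fstab
        regexp: '^([^#].*\sswap\s.*)$'
        replace: '# \1'

    - name: Add the ubuntu user to the docker group
      user:
        name: ubuntu
        groups: docker
        append: true
```

It would be run the same way as the join playbook, for example `ansible-playbook -i ansible-hosts.txt ansible-install-kubernetes-dependencies.yml`. The `hold` selections keep routine `apt upgrade` runs from moving the Kubernetes binaries to a newer version.

On Kubernetes 1.24 and later, the kubelet talks to containerd rather than to Docker directly. The `containerd.io` package's default `/etc/containerd/config.toml` may have the CRI plugin disabled, so that file is worth checking before running `kubeadm init`.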
We have a Kubernetes cluster created on-premises (with Google Anthos) in Network "A", and our development teams reside in Network "B". IT security policies require developers to log in to a terminal server (or jumpbox, or bastion) in Network "A" before they are allowed to interact with the Kubernetes cluster. This breaks their workflow, especially on the development cluster.

I was looking at the solution described here: , but we are concerned that gcloud will overwrite the changes made to the kubeconfig.

The article now describes how to do this with a GCE bastion. Does the solution you describe also work with an on-premises jumpbox? On GCE there is the "tunnel" method described in "Set up an SSH tunnel for private browsing using Compute Engine" (Google Cloud Platform Community), but that is not available for Google Anthos. I'm also wondering whether we can configure TinyProxy on our own server and tunnel through that transparently.

OK, so I have had a crash course in Google Anthos from one of our engineers, and we got part of it working. However, it would require end users to add a line to their "hosts" file, which is not the user experience we want to provide (if policy allows it at all). Here is what we do:

1. We log in using `gcloud anthos auth login --login-config <login-config> --cluster <cluster>`.
2. We get redirected to Azure AD, where we log in.
3. This generates a kubeconfig containing a certificate and a JWT token (among other things). The certificate only validates against the controlPlaneVIP for the admin cluster and the user cluster (I hope I'm saying this part right :-)).
4. We run the ssh command to open the tunnel, which routes 127.0.0.1:7443 to the jumpbox and from there to the Kubernetes API server (controlPlaneVIP).
5. We then get an error that the certificate is only valid for the controlPlaneVIP (and one other IP address), not for 127.0.0.1.

I don't think what we are looking for is very exceptional; I've seen it done in many organizations (with plain Kubernetes, that is). We would be very interested to hear from Google or from the community how we can accomplish this.
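One approach that may be worth testing, sketched here under assumptions, keeps the tunnel on `127.0.0.1:7443`. It then tells kubectl which name to validate the API server certificate against, using the `tls-server-name` field of the kubeconfig cluster entry (supported by recent kubectl releases). The cluster name, the `<controlPlaneVIP>` placeholder, and the remote port 443 are hypothetical. It is also not known whether `gcloud anthos auth login` preserves this field when it regenerates the kubeconfig.

```yaml
# Hypothetical kubeconfig cluster entry for use through the SSH tunnel.
# ASSUMPTION: the tunnel was opened with something like
#   ssh -L 7443:<controlPlaneVIP>:443 user@jumpbox   (port 443 assumed)
apiVersion: v1
kind: Config
clusters:
  - name: anthos-user-cluster            # hypothetical name
    cluster:
      server: https://127.0.0.1:7443     # local end of the SSH tunnel
      # Validate the API server certificate against the VIP it was issued
      # for, instead of against 127.0.0.1
      tls-server-name: <controlPlaneVIP>
      certificate-authority-data: <unchanged from the generated kubeconfig>
```

kubectl also accepts the global flags `--server` and `--tls-server-name`. The same override could therefore be passed per command without editing the generated kubeconfig at all, for example `kubectl --server=https://127.0.0.1:7443 --tls-server-name=<controlPlaneVIP> get nodes`. That may sidestep the concern about gcloud overwriting local changes.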
